Introduction: The Case for a Compliance Matrix in Agentic AI

Agentic AI systems operate with a degree of autonomy that fundamentally challenges traditional compliance frameworks. Unlike conventional software, where control flows are largely deterministic and auditable by design, agentic systems make real-time decisions, invoke external tools, delegate tasks to sub-agents, and modify their own execution paths based on contextual reasoning. This operational model introduces compliance surface areas that existing enterprise policy frameworks were never built to address.

A policy compliance matrix provides a structured mapping between the controls an organisation requires and the controls an agentic AI deployment actually enforces. It serves three critical functions: it identifies where existing security controls extend naturally to agentic workloads, it exposes gaps where agent-specific behaviours fall outside traditional control boundaries, and it creates a shared vocabulary between security teams, compliance officers, and AI engineering groups.

Organisations deploying agentic AI without a structured compliance matrix are effectively operating with blind spots across identity management, data governance, and operational accountability — the three domains where autonomous agents introduce the most novel risk.

The matrix presented here is designed for enterprise environments operating under Australian regulatory expectations, with cross-references to international standards. It is not a static checklist but a living assessment instrument that should be revisited as agent capabilities evolve and regulatory guidance matures.

Control Domains

The compliance matrix is organised across seven control domains. Each domain contains specific controls that address the unique behaviours of agentic AI systems alongside standard enterprise security requirements.

| Control Domain | Key Controls | Agentic AI Relevance |
|---|---|---|
| Identity & Access | Agent identity registration, credential lifecycle management, least-privilege enforcement per task, session-scoped tokens, inter-agent authentication | Agents require machine identities distinct from user accounts, with permissions that adapt to task context rather than static role assignments |
| Data Protection | Input/output data classification, prompt injection filtering, context window boundary enforcement, cross-agent data leakage prevention, PII redaction in agent memory | Agents process, transform, and relay data across tool calls and sub-agents, creating data flow paths not covered by traditional DLP controls |
| Audit & Logging | Full decision-chain logging, tool invocation records, reasoning trace capture, immutable audit trails, log retention and integrity verification | Every autonomous decision, tool call, and delegation event must be traceable to reconstruct the agent's reasoning and actions post-hoc |
| Operational Resilience | Agent failover and recovery, resource consumption limits, runaway task termination, graceful degradation, dependency health monitoring | Autonomous agents can enter infinite loops, escalate resource usage, or cascade failures across interconnected agent networks |
| Model Governance | Model version registry, deployment approval workflows, performance baseline tracking, bias and drift monitoring, rollback procedures | The underlying model is the core logic engine; changes to model versions, fine-tuning, or prompt templates alter agent behaviour in ways that affect compliance posture |
| Human Oversight | Approval gates for high-risk actions, escalation triggers, override mechanisms, confidence-threshold routing, human-in-the-loop audit sampling | Regulatory frameworks increasingly mandate meaningful human oversight for automated decision-making, particularly in high-stakes domains |
| Incident Management | Agent-specific incident classification, automated containment (agent isolation, credential revocation), root cause analysis for autonomous actions, post-incident agent behavioural review | Incidents involving agentic systems require new response playbooks that account for autonomous propagation, multi-agent involvement, and non-deterministic behaviour |

Framework Mapping

Each control domain maps to established compliance frameworks. The following cross-reference table identifies the primary clauses, categories, or principles from five widely adopted standards and regulations that correspond to each domain in the matrix.

| Control Domain | ISO 27001:2022 | NIST CSF 2.0 | SOC 2 Type II | APRA CPS 234 | EU AI Act |
|---|---|---|---|---|---|
| Identity & Access | A.8.2, A.8.5 | PR.AA | CC6.1, CC6.2, CC6.3 | Paragraphs 25–27 | Art. 9 (risk management) |
| Data Protection | A.8.10, A.8.11, A.8.12 | PR.DS | CC6.5, CC6.7 | Paragraphs 21–23 | Art. 10 (data governance) |
| Audit & Logging | A.8.15, A.8.16 | DE.AE, DE.CM | CC7.1, CC7.2 | Paragraph 36 | Art. 12 (record-keeping) |
| Operational Resilience | A.5.29, A.5.30, A.8.14 | RC.RP, PR.IR | CC7.4, CC7.5, A1.2 | Paragraphs 32–34 | Art. 15 (robustness) |
| Model Governance | A.8.9, A.8.25, A.8.32 | GV.RM, ID.RA | CC8.1 | Paragraphs 15–18 | Art. 9, Art. 17 (quality management) |
| Human Oversight | A.5.8, A.5.19 | GV.OC | CC1.1, CC1.4 | Paragraphs 13–14 | Art. 14 (human oversight) |
| Incident Management | A.5.24, A.5.25, A.5.26 | RS.AN, RS.MA | CC7.3, CC7.4 | Paragraphs 37–39 | Art. 73 (serious incident reporting) |

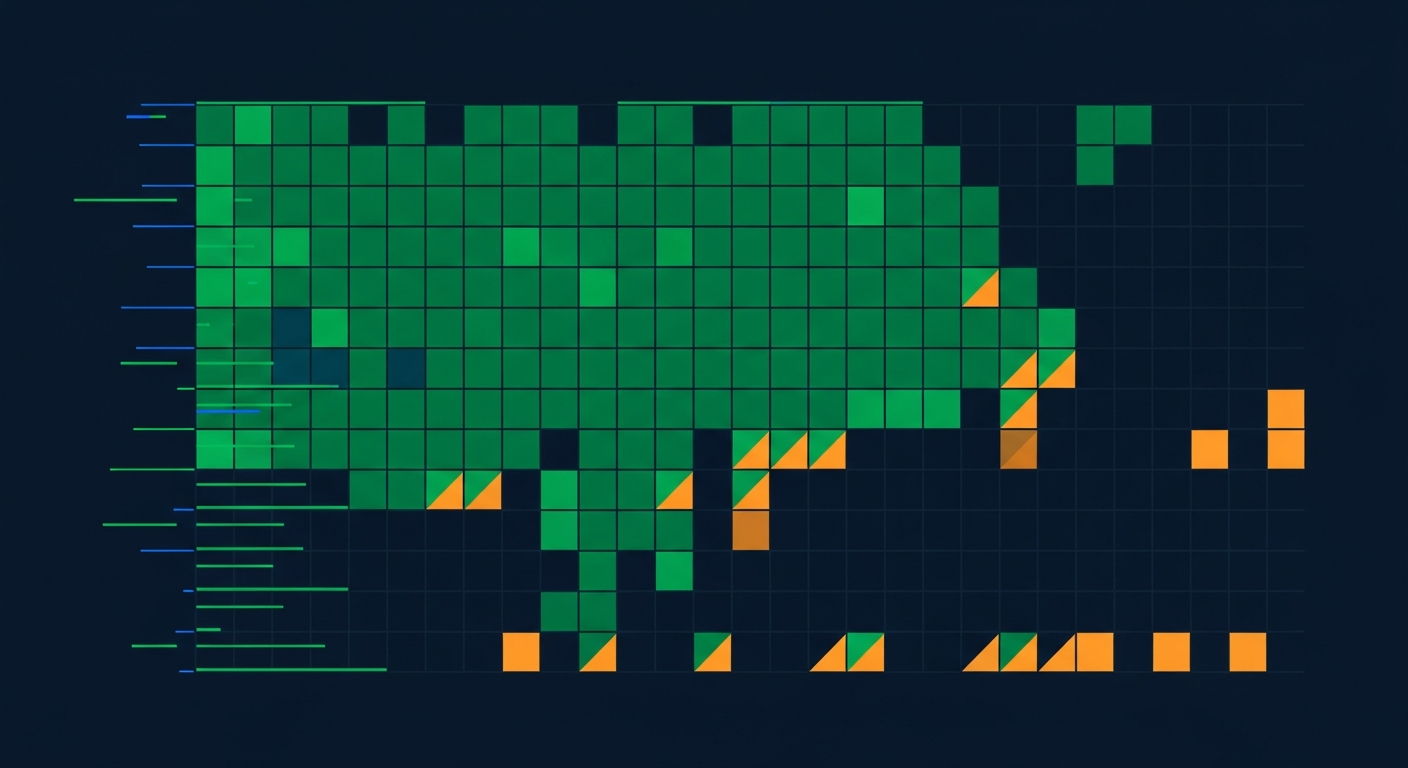

Coverage Analysis

Assessing compliance coverage requires a structured methodology that accounts for the dynamic nature of agentic systems. Each control is evaluated against three coverage levels:

| Coverage Level | Definition | Scoring Criteria | Action Required |
|---|---|---|---|
| Full | Control is implemented, tested, monitored, and evidenced for agentic workloads | Automated enforcement active; audit evidence available for the last 12 months; tested against agent-specific attack scenarios | Maintain through continuous monitoring; include in periodic re-certification |
| Partial | Control exists but does not fully extend to agentic behaviours or lacks agent-specific evidence | Traditional control in place but not validated for agent identity, inter-agent flows, or autonomous decision paths; evidence gaps exist | Extend control scope to cover agentic patterns; generate agent-specific audit evidence; target full coverage within defined remediation window |
| Gap | No control addressing the requirement for agentic workloads | No policy, no technical enforcement, no monitoring for the identified risk area | Prioritise based on risk rating; implement compensating controls immediately; develop full control on remediation roadmap |

The scoring methodology proceeds in four stages. First, enumerate all controls from the matrix against the target framework. Second, for each control, collect implementation evidence specific to agentic workloads — not just traditional IT systems. Third, assign the coverage level based on the criteria above. Fourth, calculate domain-level and aggregate coverage scores as the percentage of controls at full coverage, weighted by the risk rating of each control.
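The fourth stage can be sketched in a few lines of Python. This is a minimal illustration, assuming a numeric risk weight per control (the 1 to 3 scale, the `ControlAssessment` type, and the sample assessments are hypothetical, not part of the matrix itself).

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ControlAssessment:
    domain: str
    control: str
    coverage: str       # "full", "partial", or "gap"
    risk_weight: float  # hypothetical scale: higher means riskier


def weighted_full_coverage(assessments):
    """Risk-weighted percentage of controls assessed at full coverage."""
    total = sum(a.risk_weight for a in assessments)
    if total == 0:
        return 0.0
    full = sum(a.risk_weight for a in assessments if a.coverage == "full")
    return 100.0 * full / total


def domain_scores(assessments):
    """Apply the same calculation separately to each control domain."""
    by_domain = defaultdict(list)
    for a in assessments:
        by_domain[a.domain].append(a)
    return {d: weighted_full_coverage(items) for d, items in by_domain.items()}


# Illustrative data only; real weights come from the organisation's risk ratings.
assessments = [
    ControlAssessment("Identity & Access", "Agent identity registration", "full", 3),
    ControlAssessment("Identity & Access", "Session-scoped tokens", "partial", 2),
    ControlAssessment("Audit & Logging", "Reasoning trace capture", "gap", 3),
    ControlAssessment("Audit & Logging", "Tool invocation records", "full", 2),
]

per_domain = domain_scores(assessments)
aggregate = weighted_full_coverage(assessments)
print(per_domain)  # {'Identity & Access': 60.0, 'Audit & Logging': 40.0}
print(aggregate)   # 50.0
```

Only controls at full coverage contribute to the numerator, which matches the definition above; a variant that gives partial coverage fractional credit would be a different scoring scheme and should be documented as such.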

A common pitfall is inheriting coverage scores from traditional IT assessments. An organisation may have full coverage for user identity management under ISO 27001 but a complete gap for agent identity management. The matrix must be assessed independently for agentic workloads.

Gap Identification: Common Deficiencies in Agentic AI Deployments

Analysis across early enterprise agentic AI deployments reveals four recurring gap patterns that most organisations encounter regardless of their existing compliance maturity.

Autonomous Action Logging

Traditional logging captures API calls and database transactions but fails to record the reasoning chain that led an agent to invoke a particular tool or take a specific action. Without decision-trace logging, an investigator can establish that an agent performed an action but not why. This gap directly affects compliance with ISO 27001 A.8.15 and EU AI Act Article 12, both of which call for event records sufficient to trace how the system functioned.
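One way to capture the reasoning alongside the action is a hash-chained trace, sketched below. The field names and the `append_trace` and `verify_chain` helpers are assumptions for illustration; a production log would also carry timestamps and be written to tamper-resistant storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry in a chain


def _digest(body):
    """Stable SHA-256 over the entry body (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_trace(log, agent_id, reasoning, tool, arguments):
    """Append a decision-trace entry chained to the previous entry's hash."""
    body = {
        "agent_id": agent_id,
        "reasoning": reasoning,  # why the agent chose this action
        "tool": tool,            # what it invoked
        "arguments": arguments,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log):
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


trace_log = []
append_trace(trace_log, "agent-7", "Invoice exceeds approval limit",
             "request_approval", {"invoice_id": "INV-1"})
append_trace(trace_log, "agent-7", "Approval granted by reviewer",
             "pay_invoice", {"invoice_id": "INV-1"})
chain_ok = verify_chain(trace_log)
```

Because each entry embeds the hash of its predecessor, editing an earlier reasoning field invalidates every later entry, which gives a simple integrity check for the audit trail.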

Inter-Agent Data Flows

When agents delegate tasks to sub-agents or collaborate in multi-agent architectures, data passes between agent contexts in ways that bypass traditional network-level DLP controls. Sensitive information embedded in shared context windows, tool outputs, or inter-agent messages may traverse trust boundaries without classification, filtering, or encryption. This gap is particularly acute under APRA CPS 234, which mandates controls proportionate to the criticality and sensitivity of information assets.
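A boundary check at each hand-off is one way to apply classification before data leaves an agent context. The sketch below assumes a four-level labelling scheme and a per-agent clearance; both, along with the `check_handoff` name, are hypothetical.

```python
# Hypothetical classification levels, ordered from least to most sensitive.
LEVELS = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}


def check_handoff(message, recipient_clearance):
    """Decide whether a message may cross into another agent's context.

    Returns (allowed, reason). Unlabelled payloads are blocked rather than
    assumed safe, so that missing classification is surfaced as a finding.
    """
    label = message.get("classification")
    if label not in LEVELS:
        return False, "unclassified payload"
    if LEVELS[label] > LEVELS[recipient_clearance]:
        return False, f"{label} exceeds recipient clearance {recipient_clearance}"
    return True, "ok"


allowed = check_handoff({"classification": "internal", "body": "Q3 summary"}, "confidential")
blocked = check_handoff({"classification": "restricted", "body": "customer ledger"}, "internal")
unlabelled = check_handoff({"body": "tool output"}, "restricted")
```

Denying unlabelled payloads by default is a design choice; it trades some friction for the guarantee that every inter-agent message has been classified at least once.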

Dynamic Permission Changes

Agentic systems that operate with adaptive permissions, where an agent's access scope changes with the task it is performing, create ephemeral access patterns that static access control reviews cannot capture. Quarterly access reviews, commonly used as evidence in SOC 2 Type II audits, provide no visibility into permissions that existed for seconds during an agent's task execution and were then revoked. Organisations need real-time permission audit streams rather than periodic snapshots.
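A permission audit stream can be as simple as an ordered series of grant and revoke events. The following sketch, with a hypothetical `PermissionEvent` shape and sample data, shows how such a stream exposes short-lived grants that a periodic snapshot would miss.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PermissionEvent:
    agent_id: str
    scope: str
    action: str       # "grant" or "revoke"
    timestamp: float  # seconds since epoch


def grant_durations(events):
    """Pair each grant with its later revoke.

    Returns (closed, still_open): closed is a list of
    (agent_id, scope, seconds_held); still_open lists grants never revoked.
    """
    open_grants = {}
    closed = []
    for ev in sorted(events, key=lambda e: e.timestamp):
        key = (ev.agent_id, ev.scope)
        if ev.action == "grant":
            open_grants[key] = ev.timestamp
        elif ev.action == "revoke" and key in open_grants:
            closed.append((ev.agent_id, ev.scope, ev.timestamp - open_grants.pop(key)))
    return closed, sorted(open_grants)


events = [
    PermissionEvent("agent-7", "payments:write", "grant", 100.0),
    PermissionEvent("agent-7", "payments:write", "revoke", 104.5),
    PermissionEvent("agent-9", "crm:read", "grant", 101.0),
]
closed, still_open = grant_durations(events)
print(closed)      # [('agent-7', 'payments:write', 4.5)]
print(still_open)  # [('agent-9', 'crm:read')]
```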

Model Version Tracking

Agent behaviour is fundamentally determined by the underlying model, prompt templates, and tool configurations. When any of these components change, the agent's compliance posture may shift. Most organisations lack a unified registry that links a specific agent action to the exact model version, prompt version, and configuration state that produced it. This makes post-incident analysis and regulatory reporting significantly more difficult.
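A unified registry of this kind might look like the following sketch. The `ProvenanceRegistry` class, its in-memory storage, and the version strings are illustrative assumptions; the point is that each action identifier resolves to the model version, prompt version, and a fingerprint of the configuration in force at the time.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Provenance:
    model_version: str
    prompt_version: str
    config_hash: str


def config_fingerprint(config):
    """Order-independent SHA-256 fingerprint of a JSON-serialisable config."""
    return hashlib.sha256(json.dumps(config, sort_keys=True).encode("utf-8")).hexdigest()


class ProvenanceRegistry:
    """In-memory stand-in for a durable registry (hypothetical)."""

    def __init__(self):
        self._by_action = {}

    def record(self, action_id, model_version, prompt_version, config):
        prov = Provenance(model_version, prompt_version, config_fingerprint(config))
        self._by_action[action_id] = prov
        return prov

    def lookup(self, action_id):
        return self._by_action[action_id]


registry = ProvenanceRegistry()
recorded = registry.record(
    "action-001",
    model_version="model-2025-01",   # hypothetical version labels
    prompt_version="prompt-v14",
    config={"tools": ["search", "email"], "max_steps": 8},
)
found = registry.lookup("action-001")
```

Storing a fingerprint rather than the full configuration keeps records small while still letting an investigator confirm which archived configuration was active for a given action.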

Enterprise Requirement Alignment

The base compliance matrix must be customised to reflect the specific regulatory and operational requirements of each industry. The following table outlines key alignment considerations for three sectors with significant agentic AI adoption trajectories.

| Industry | Primary Regulatory Drivers | Matrix Customisation Focus | Elevated Controls |
|---|---|---|---|
| Financial Services | APRA CPS 234, CPS 230, ASIC RG 271, PCI DSS 4.0 | Emphasise operational resilience and third-party risk; extend incident management to cover autonomous trading or advisory actions; map agent access to financial data repositories | Dynamic permission auditing, real-time transaction monitoring for agent-initiated financial operations, board-level reporting on agent risk exposure |
| Healthcare | My Health Records Act 2012, Privacy Act 1988, TGA regulatory frameworks, NSQHS Standards | Prioritise data protection controls for patient health information; enforce strict human oversight for clinical decision support; require consent-aware data handling in agent contexts | PII/PHI redaction in all agent memory and logs, mandatory human-in-the-loop for clinical recommendations, segregated agent environments for research versus clinical data |
| Government | ISM (Information Security Manual), PSPF, Privacy Act 1988, Digital Transformation Agency guidelines | Align with ISM control classifications; enforce PROTECTED-level data handling where applicable; require Australian data residency for agent processing and logging | Security clearance-equivalent controls for agents accessing classified contexts, mandatory audit logging with sovereign storage, supply chain assurance for model providers |

Industry customisation is not merely additive. Financial services organisations may deprioritise certain healthcare-specific controls, but they must elevate controls around real-time autonomous decision-making in market-facing systems. The matrix should reflect actual risk exposure, not a superset of all possible requirements.

When customising the matrix, begin with the base seven domains, then apply an industry overlay that adjusts control weights, adds sector-specific controls, and maps to the relevant regulatory clauses. Document the rationale for any base controls that are scoped out, as auditors and regulators will expect justification for exclusions.
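The overlay step described above can be expressed as a small function. This is a sketch under assumed data shapes (controls as a name-to-weight mapping, exclusions as a name-to-rationale mapping); the control weights and the exclusion rationale shown are hypothetical.

```python
def apply_overlay(base_controls, weight_adjustments, added_controls, exclusions):
    """Apply an industry overlay to the base matrix.

    base_controls, added_controls: {control_name: risk_weight}
    weight_adjustments:            {control_name: new_risk_weight}
    exclusions:                    {control_name: documented rationale}
    """
    undocumented = [c for c, why in exclusions.items() if not why.strip()]
    if undocumented:
        # Scoped-out controls without a rationale are rejected outright.
        raise ValueError(f"Exclusions need a documented rationale: {undocumented}")

    customised = {c: w for c, w in base_controls.items() if c not in exclusions}
    for control, weight in weight_adjustments.items():
        if control in customised:
            customised[control] = weight
    customised.update(added_controls)
    return customised


base = {
    "Full decision-chain logging": 2,
    "Approval gates for high-risk actions": 2,
    "PII redaction in agent memory": 2,
}
financial = apply_overlay(
    base,
    weight_adjustments={"Approval gates for high-risk actions": 3},
    added_controls={"Real-time transaction monitoring": 3},
    exclusions={"PII redaction in agent memory":
                "Hypothetical rationale: agent handles no personal data"},
)
```

Making the rationale a required input, rather than an optional note, means an exclusion cannot enter the customised matrix without the justification that auditors will later ask for.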

Continuous Compliance

Static compliance assessments are insufficient for agentic AI systems. Agent configurations change frequently, model updates alter behavioural patterns, and new tool integrations introduce fresh compliance surface areas. A continuous compliance approach requires three interconnected capabilities.

Automated Monitoring

Deploy policy-as-code engines that evaluate agent behaviour against compliance rules in real time. Every agent action — tool invocation, data access, delegation event, permission escalation — should be checked against the compliance matrix controls as it occurs. Violations trigger immediate alerts and, for high-severity controls, automated containment actions such as agent suspension or credential revocation. Monitoring should cover not only the agent itself but the entire execution context: the model endpoint, the tool APIs, the data stores accessed, and the inter-agent communication channels.
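The evaluation loop can be illustrated with plain Python standing in for a dedicated policy engine. The rule set, the action dictionary shape, and the `evaluate` function are assumptions for this sketch, not the interface of any particular product.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    control: str                      # matrix control the rule enforces
    severity: str                     # "high" or "low"
    violated: Callable[[dict], bool]  # returns True when the action breaks the rule


# Hypothetical rules keyed to controls from the matrix.
RULES = [
    Rule("Least-privilege enforcement per task", "high",
         lambda a: a["type"] == "tool_call" and a["tool"] not in a["allowed_tools"]),
    Rule("PII redaction in agent memory", "low",
         lambda a: a["type"] == "memory_write" and a.get("contains_pii", False)),
]


def evaluate(action, rules=RULES):
    """Check one agent action and return (decision, violated_controls).

    "contain" means suspend the agent or revoke credentials, "alert" means
    notify without blocking, and "pass" means no rule was violated.
    """
    violations = [r for r in rules if r.violated(action)]
    if any(r.severity == "high" for r in violations):
        decision = "contain"
    elif violations:
        decision = "alert"
    else:
        decision = "pass"
    return decision, [r.control for r in violations]


result = evaluate({"type": "tool_call", "tool": "wire_transfer",
                   "allowed_tools": ["search", "summarise"]})
print(result)  # ('contain', ['Least-privilege enforcement per task'])
```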

Drift Detection

Compliance drift occurs when the gap between the assessed state and the actual state widens over time. In agentic AI systems, drift is accelerated by model updates, prompt template changes, new tool integrations, and evolving agent orchestration patterns. Effective drift detection compares the current agent configuration and behavioural profile against the last certified baseline. Key drift indicators include changes in the frequency or pattern of tool invocations, shifts in data access patterns, new inter-agent communication pathways, and deviations in decision-chain complexity or depth.
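As one example of the first indicator, the shift in tool invocation patterns can be quantified by comparing the current distribution of tool calls with the certified baseline. The sketch below uses total variation distance as the drift score; that metric, the function names, and the sample data are choices made for illustration.

```python
from collections import Counter


def tool_usage_drift(baseline_calls, current_calls):
    """Total variation distance between two tool-invocation distributions.

    Returns a value from 0.0 (identical mix of tools) to 1.0 (no overlap).
    """
    base, cur = Counter(baseline_calls), Counter(current_calls)
    n_base, n_cur = sum(base.values()), sum(cur.values())
    if n_base == 0 or n_cur == 0:
        raise ValueError("both samples must be non-empty")
    tools = set(base) | set(cur)
    return 0.5 * sum(abs(base[t] / n_base - cur[t] / n_cur) for t in tools)


def new_tools(baseline_calls, current_calls):
    """Tools seen now that never appeared in the certified baseline."""
    return sorted(set(current_calls) - set(baseline_calls))


baseline = ["search", "search", "search", "email"]
current = ["search", "search", "email", "payments"]

drift = tool_usage_drift(baseline, current)
unseen = new_tools(baseline, current)
print(drift)   # 0.25
print(unseen)  # ['payments']
```

A threshold on the drift score, or any non-empty list of previously unseen tools, could then feed the re-certification triggers; where to set that threshold is an organisational decision.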

Re-certification Workflows

Establish automated re-certification triggers tied to material changes in the agent environment. Rather than relying solely on calendar-based review cycles, initiate compliance re-assessment when any of the following events occur: model version updates, new tool integrations, changes to agent permission policies, significant drift detected by monitoring systems, or regulatory guidance updates. Each re-certification cycle should produce an updated compliance matrix with current coverage scores, a delta report comparing the new assessment to the previous baseline, and a remediation plan for any newly identified gaps.
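The trigger check and the delta report can both be sketched briefly. The event names below are hypothetical labels for the five trigger types listed above, and the coverage data is illustrative.

```python
# Hypothetical event labels for the material changes listed above.
RECERT_TRIGGERS = {
    "model_version_update",
    "new_tool_integration",
    "permission_policy_change",
    "significant_drift",
    "regulatory_guidance_update",
}


def recertification_reasons(events):
    """Return the observed events that should start a re-assessment."""
    return sorted(set(events) & RECERT_TRIGGERS)


def delta_report(previous, current):
    """Map each control whose coverage changed to (old_level, new_level).

    Controls present in only one assessment appear with None on the other side.
    """
    controls = sorted(set(previous) | set(current))
    return {c: (previous.get(c), current.get(c))
            for c in controls if previous.get(c) != current.get(c)}


reasons = recertification_reasons(
    ["log_rotation", "new_tool_integration", "model_version_update"]
)
delta = delta_report(
    previous={"Reasoning trace capture": "gap", "Session-scoped tokens": "full"},
    current={"Reasoning trace capture": "partial", "Session-scoped tokens": "full",
             "Inter-agent authentication": "gap"},
)
print(reasons)  # ['model_version_update', 'new_tool_integration']
print(delta)
```

Routine events such as log rotation are ignored, so re-assessment starts only on the material changes the workflow defines.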

| Compliance Activity | Trigger | Frequency | Output |
|---|---|---|---|
| Real-time policy enforcement | Every agent action | Continuous | Pass/fail log entries, violation alerts |
| Drift assessment | Configuration change or scheduled scan | Daily / on-change | Drift score, deviation report |
| Coverage re-scoring | Material environment change | On-trigger + quarterly minimum | Updated compliance matrix, delta report |
| Full re-certification | Annual cycle or major release | Annually + on major change | Certified compliance matrix, remediation roadmap, board-ready summary |

The policy compliance matrix is the foundation for governing agentic AI within enterprise environments. It transforms abstract regulatory requirements into concrete, measurable controls that can be assessed, monitored, and continuously improved. As agentic AI capabilities advance and regulatory frameworks mature, the matrix must evolve in step — making continuous compliance not just a best practice, but an operational necessity.